Storing data: Create new tables using Pandas

We can also use pandas to create new tables within an SQLite database. Here, we re-do an exercise we did before with CSV files, this time using the SQLite database. We first read in our survey data, then select only those survey results for 2002, and then save them out to their own table. The example below reads the result of a joined query into a DataFrame and writes it, together with an existing DataFrame, back to the database as new tables:

    import sqlite3
    import pandas as pd

    # Connect to the database
    con = sqlite3.connect("data/portal_mammals.sqlite")

    # Read the results into a DataFrame
    df1 = pd.read_sql_query(
        """SELECT surveys.year, plots.plot_type, species.genus, species.species, surveys.sex
           FROM surveys
           INNER JOIN plots ON surveys.plot = plots.plot_id
           INNER JOIN species ON surveys.species = species.species_id
           WHERE surveys.year >= 1998 AND surveys.year <= 2001
           AND (surveys.sex = 'M' OR surveys.sex = 'F')""",
        con)

    df1.to_sql("New Table 1", con, if_exists="replace")

    # We already have the 'df' DataFrame created in the earlier exercise
    df.to_sql("New Table 2", con, if_exists="replace")

    # Close the connection
    con.close()

If the database is shared with others and common queries (and potentially data corrections) are likely to be required by many, it may be efficient for one person to perform the work and save it back to the database as a new table, so others can access the results directly instead of performing the query themselves. However, we might avoid doing this if the database is an authoritative source (potentially version controlled) which should not be modified by users. Instead, we might save the query results to a new database that is more appropriate for downstream work.

1) In `to_sql`, the table name is the name of the SQL table you want to push data to, and `conn` is the database connection.

2) Marichyasana's answer uses `CREATE TABLE`, which will not work if you run it a second time because the table has already been created. You can replace the `CREATE TABLE` query with `CREATE TABLE IF NOT EXISTS` (check the SQLite documentation).
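To make the point about `CREATE TABLE IF NOT EXISTS` concrete, here is a minimal sketch using only Python's built-in `sqlite3` module and an in-memory database. The table name `surveys_2002`, its columns, and the helper `create_surveys_table` are made up for illustration and do not come from the lesson's database.

```python
import sqlite3


def create_surveys_table(con):
    """Create the (hypothetical) table only when it is missing."""
    con.execute(
        "CREATE TABLE IF NOT EXISTS surveys_2002 (record_id INTEGER, year INTEGER)"
    )


# In-memory database, so nothing is written to disk
con = sqlite3.connect(":memory:")

create_surveys_table(con)
con.execute("INSERT INTO surveys_2002 VALUES (1, 2002)")

# Running the same statement again does not raise and keeps the existing row
create_surveys_table(con)
row_count = con.execute("SELECT COUNT(*) FROM surveys_2002").fetchone()[0]

# A plain CREATE TABLE on an existing table raises sqlite3.OperationalError
try:
    con.execute("CREATE TABLE surveys_2002 (record_id INTEGER, year INTEGER)")
    plain_create_failed = False
except sqlite3.OperationalError:
    plain_create_failed = True

con.close()
```

With pandas, `to_sql(..., if_exists="replace")` plays a similar role: a second run does not fail, although it overwrites the table rather than keeping it.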
We read the file by using the csv.DictReader constructor with the file fin. Then we get the items that we want to write to the DB into a list of tuples with `to_db = [(i['col1'], i['col2']) for i in dr]`.

Currently I am using Python and Pandas to automate the process of creating a SQLite database and inserting the data into it. I have also tried PostgreSQL for this, though I found it slower than pandas+sqlite for some reason. Using the 'Id' column of the candidate record retrieved, run a query on application.csv: select from application where candidateId = 'id retrieved from previous query result'. Then dump the results into Candidate-filtered.csv.

Note that if you are developing a package designed for others to use, and use DuckDB in the package, it is recommended that you create connection objects instead of using the methods on the duckdb module. That is because the duckdb module uses a shared global database, which can cause hard-to-debug issues if used from within multiple different packages.
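Going back to the CSV steps described earlier (read with `csv.DictReader`, build a list of tuples, then filter applications by the candidate's Id), the whole flow might look roughly like the sketch below. It uses only the standard library. The sample rows, the columns other than `Id` and `candidateId`, and the in-memory `io.StringIO` objects standing in for the real CSV files are all assumptions made for illustration.

```python
import csv
import io
import sqlite3

# Hypothetical stand-ins for candidate.csv and application.csv
candidate_csv = io.StringIO("Id,name\n1,Ada\n2,Grace\n")
application_csv = io.StringIO(
    "appId,candidateId,role\n10,1,Engineer\n11,2,Analyst\n12,1,Manager\n"
)

con = sqlite3.connect(":memory:")
con.execute("CREATE TABLE IF NOT EXISTS candidate (Id INTEGER, name TEXT)")
con.execute(
    "CREATE TABLE IF NOT EXISTS application "
    "(appId INTEGER, candidateId INTEGER, role TEXT)"
)

# Read each file with csv.DictReader and collect the rows as a list of tuples
dr = csv.DictReader(candidate_csv)
to_db = [(i["Id"], i["name"]) for i in dr]
con.executemany("INSERT INTO candidate VALUES (?, ?)", to_db)

dr = csv.DictReader(application_csv)
to_db = [(i["appId"], i["candidateId"], i["role"]) for i in dr]
con.executemany("INSERT INTO application VALUES (?, ?, ?)", to_db)

# Retrieve one candidate record, then use its Id to filter the applications
candidate_id = con.execute(
    "SELECT Id FROM candidate WHERE name = ?", ("Ada",)
).fetchone()[0]
rows = con.execute(
    "SELECT appId, candidateId, role FROM application "
    "WHERE candidateId = ? ORDER BY appId",
    (candidate_id,),
).fetchall()

# Dump the results as CSV (StringIO stands in for Candidate-filtered.csv)
out = io.StringIO()
writer = csv.writer(out, lineterminator="\n")
writer.writerow(["appId", "candidateId", "role"])
writer.writerows(rows)
filtered_csv = out.getvalue()

con.close()
```

Passing the Id as a `?` parameter, rather than pasting it into the SQL string, avoids quoting problems when the value comes from a previous query result.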

The only difference is that when using the duckdb module a global in-memory database is used. The connection object and the duckdb module can be used interchangeably – they support the same methods. show () # the context manager closes the connection automatically sql ( "INSERT INTO test VALUES (42)" ) con.

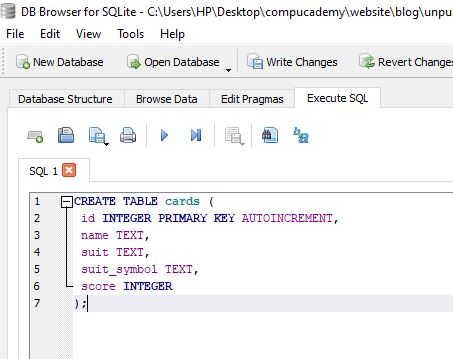

sql ( "CREATE TABLE test (i INTEGER)" ) con.
